About

Piccolo embraces computing as a first-order principle in networked systems. It aims to develop a novel approach to integrating computing and networking, and to illustrate that approach in several use cases.

Taking the Next Step for Computing in the Network

Today, services are increasingly dominated by large cloud service providers, such as Google, that run their own cloud and CDN infrastructure. We expect that, by default, emerging technologies such as cyber-physical connectivity, networked virtual reality, and data analytics will lead to further centralisation of cloud and network infrastructure. This will leave the ability to innovate largely in the hands of the dominant cloud service providers. It also induces technical problems such as:

- Data privacy is at the mercy of a few big cloud operators whose operations are difficult to audit and control.

- High-volume sensing and the huge number of IoT devices lead to a “reverse Content Delivery Networking” problem, potentially exhausting the upload capacity.

- The advantage of low latency of next generation 5G networks is lost if requests have to travel deep into the cloud and back.

Companies wanting to deploy innovative distributed applications have a tough choice between staying local (clouds), relying on limited processing offered by CDNs (mostly static delivery) or deploying in public clouds with the associated privacy, latency and bandwidth limitations. Our belief is that Piccolo’s approach can alleviate these technical problems, reduce the centralisation and so allow a fresh wave of innovation at the edge (and open up the market to new entrants):

- Application back-ends are transformed from tightly knit and closely orchestrated deployments (“micro-management”) into an assembly of service components that run on a continuum of networking, computation and storage resources.

- The latency-critical parts of applications can run on Piccolo’s in-network computing, so removing the “time-of-flight” problem.

- Data can be filtered and anonymised before they are shipped back to the central cloud, which alleviates the data upload problem.

- Privacy concerns can be further reduced by running data queries as a local application operating at the edge. Hence, the actual owner of the data gains control over who has access to what subsets of the data and/or what processed results.

- Long-term resource lock-down is replaced by agile dynamic function invocations guided by configurable policies (“management by objectives”) expressed by data owners, operators of managed services, and infrastructure carriers.
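The filtering and anonymisation step in the list above can be illustrated with a minimal sketch. It is not Piccolo code: the function names, the record layout, the salt and the threshold are all hypothetical. Note also that a salted hash only pseudonymises an identifier; real anonymisation would need stronger guarantees.

```python
import hashlib
from statistics import mean


def pseudonymise(device_id: str, salt: str) -> str:
    """Replace a device identifier with a truncated, salted SHA-256 digest."""
    return hashlib.sha256((salt + device_id).encode("utf-8")).hexdigest()[:16]


def summarise_at_edge(readings, salt, threshold):
    """Reduce raw readings to at most one summary record per device.

    readings: iterable of (device_id, value) tuples collected at an edge node.
    Only devices whose mean value exceeds `threshold` are reported upstream.
    """
    by_device = {}
    for device_id, value in readings:
        by_device.setdefault(device_id, []).append(value)

    summaries = []
    for device_id, values in by_device.items():
        avg = mean(values)
        if avg > threshold:
            summaries.append({
                "device": pseudonymise(device_id, salt),  # raw ID never leaves the edge
                "mean": avg,
                "samples": len(values),
            })
    return summaries


# Hypothetical sample data: four raw readings from two devices.
raw = [("lamp-1", 20.0), ("lamp-1", 22.0), ("lamp-2", 5.0), ("lamp-2", 7.0)]
upload = summarise_at_edge(raw, salt="edge-node-secret", threshold=10.0)
print(len(raw), "raw readings ->", len(upload), "summary record(s)")
```

In this toy run only one summary record is shipped upstream in place of four raw readings, which shows the kind of upload reduction the list describes.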

Piccolo Scenarios

Piccolo is developing new concepts and mechanisms for in-network computing driven by application requirements from four main scenarios:

- Automotive Edge Computing

- In-Network Computing for Distributed Vision Processing

- Network Automation

- Smart Streetlights

Automotive Edge Computing

Cars are essentially being turned into platforms for distributed computing with many processors and significant amounts of generated and processed data, which introduces new use cases and requirements for programmability and shared access to data sources. Specific applications include:

- Connected automated driving: providing real-time information about surrounding vehicles, obstacles, hazards, or highly detailed maps.

- Predictive diagnostics: analysing the health of machine components and predicting potential measures for sustained operation and longer lifespan.

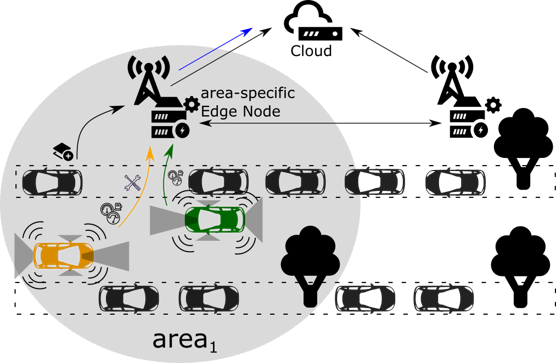

The figure above depicts a scenario where future cars can leverage external Piccolo platforms at the roadside infrastructure or telco access networks for outsourcing computation but also data storage and forwarding tasks. Legacy cyber-physical systems tend to push all the generated data to geographically clustered cloud backends. However, this is not feasible - and sometimes also not desirable - for several reasons:

- Capacity: Intel estimates that each car will generate 4,000 gigabytes of data per day. Real-time transmission of all captured data is beyond what current cellular networks are dimensioned for and would require enormous investment. (A back-of-the-envelope calculation suggests that transporting all data to the centre would require a global investment of €8T in network capacity.)

- Round-trip time: Reactive control loops (for which low-latency wireless access is designed) require processing close to the network access points.

- Data silos: If a car’s data is pulled into a car manufacturer-operated cloud (instead of sensor data sharing close to the source), some third-party applications are prevented from entering that market, and the car owner faces being locked in to a data silo.

- Privacy and data ownership: Data access based on a need-to-know principle would mandate that a car owner has fine-grained control over what is exposed, potentially imposing filtering of raw data and being able to revoke data access if necessary.

- Data context and structure: The information channel is broken between the applications, which operate at the level of (shared) data structures, and the network, which looks at raw bytes. This leads to inefficient data copying and operations.
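To put the capacity item above into perspective, the short calculation below converts the quoted 4,000 gigabytes per day into the sustained uplink rate a single car would need. It assumes decimal gigabytes and a perfectly even spread of traffic over 24 hours; the function and constant names are illustrative only.

```python
BYTES_PER_GB = 10**9            # assumption: decimal gigabytes
SECONDS_PER_DAY = 24 * 60 * 60


def sustained_uplink_mbps(gb_per_day: float) -> float:
    """Average uplink rate in Mbit/s needed to ship `gb_per_day` continuously."""
    bits_per_day = gb_per_day * BYTES_PER_GB * 8
    return bits_per_day / SECONDS_PER_DAY / 1e6


rate = sustained_uplink_mbps(4000)
print(f"{rate:.0f} Mbit/s per car")
```

Under these assumptions the result is roughly 370 Mbit/s of continuous uplink per car, before any protocol overhead or traffic peaks are considered.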

These concerns are addressed in Piccolo by including the first computational hop, that is the car, as an element of the platform. The car-resident part of Piccolo acts as a first fan-out point where on-car functions have shared access to sensory data on a par with edge- or even cloud-based data processing logic. For example, permanent monitoring and on-board data analytics can be directly executed as part of certified in-vehicle functions and third-party applications. Further, instead of pushing all data to centralised cloud infrastructures, local information is primarily processed locally or in the vicinity (car-to-edge as well as car-to-car). Access to the data can be under closer control, with visibility of different subsets (sometimes anonymised) granted to different parties, such as the car’s automated driving system, the car’s manufacturer, the “driver’s” insurance company and traffic monitoring systems.
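The idea of granting different parties visibility of different subsets of a car’s data, with the option to revoke access, can be sketched as follows. This is a hypothetical illustration rather than the Piccolo design: the `AccessPolicy` class, the party names and the record fields are all assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class AccessPolicy:
    """Maps each party to the set of record fields it is allowed to read."""
    grants: dict = field(default_factory=dict)

    def grant(self, party, fields):
        self.grants.setdefault(party, set()).update(fields)

    def revoke(self, party):
        self.grants.pop(party, None)

    def view(self, party, record):
        """Return only the fields of `record` that `party` may see."""
        allowed = self.grants.get(party, set())
        return {key: value for key, value in record.items() if key in allowed}


# Hypothetical sensor record held on the car.
record = {
    "vin": "HYPOTHETICAL-VIN",
    "speed_kmh": 87,
    "position": (52.52, 13.40),
    "brake_wear_pct": 41,
}

policy = AccessPolicy()
policy.grant("manufacturer", {"vin", "brake_wear_pct"})
policy.grant("traffic_monitoring", {"speed_kmh", "position"})

print(policy.view("manufacturer", record))        # diagnostics fields only
print(policy.view("traffic_monitoring", record))  # no vehicle identity
policy.revoke("manufacturer")
print(policy.view("manufacturer", record))        # nothing after revocation
```

A party with no grant sees an empty view by default, which matches the need-to-know principle described in the list above.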

In-Network Computing for Distributed Vision Processing

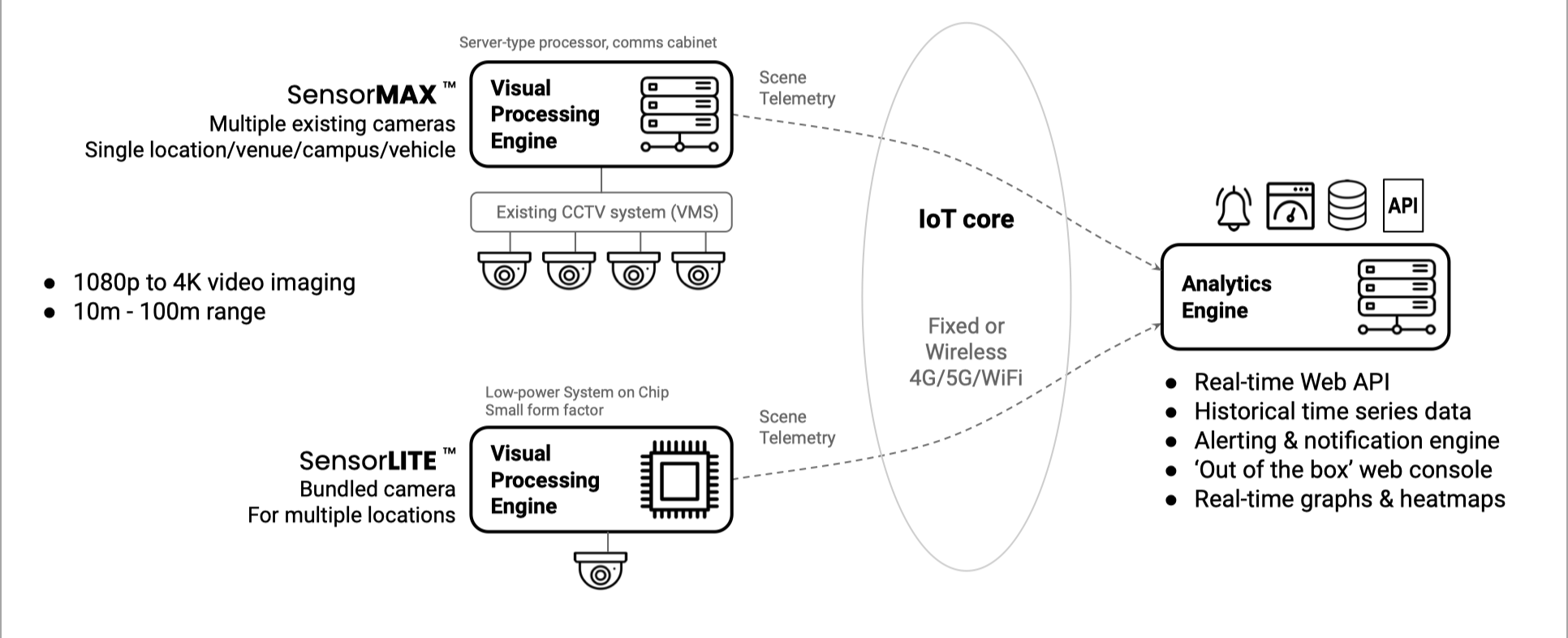

Recent years have seen an explosion in the use of Convolutional Neural Networks (CNNs) to solve computer vision tasks. This has been fuelled by a wave of academic research into ‘deep learning’ techniques giving rise to methods and models that can be transferred to the solving of complex analytics and detection problems of significant value to society, e.g. advanced detection and diagnosis of biomedical conditions, smarter and more autonomous cities and vehicles, smarter manufacturing, robotics, living environments and the analysis of human behavioural response for security and wellbeing.

The rise in commercial application and adoption of such techniques has been facilitated by a combination of factors, including the growth in easy-to-use tools for training, tuning and deployment of neural networks, reduced cost of ownership of computing from cloud service providers, and the ever-reducing costs of high-resolution imaging, e.g. HD and 4K cameras.

Furthermore, the increasing availability of low-cost and low-power System-on-Chip (SoC) and GPU systems has encouraged a rise in adoption of ‘AI at the edge’ architectures, driven principally by:

- Network capacity: the challenges and costs associated with transferring large volumes of high-resolution image data to the ‘classic’ cloud-based computing components.

- Privacy, ethics & regulation: where images involve human subjects, processing images immediately and then discarding them avoids the need to transfer and store ‘personal data’, thereby upholding user privacy as enshrined in regulations like GDPR.

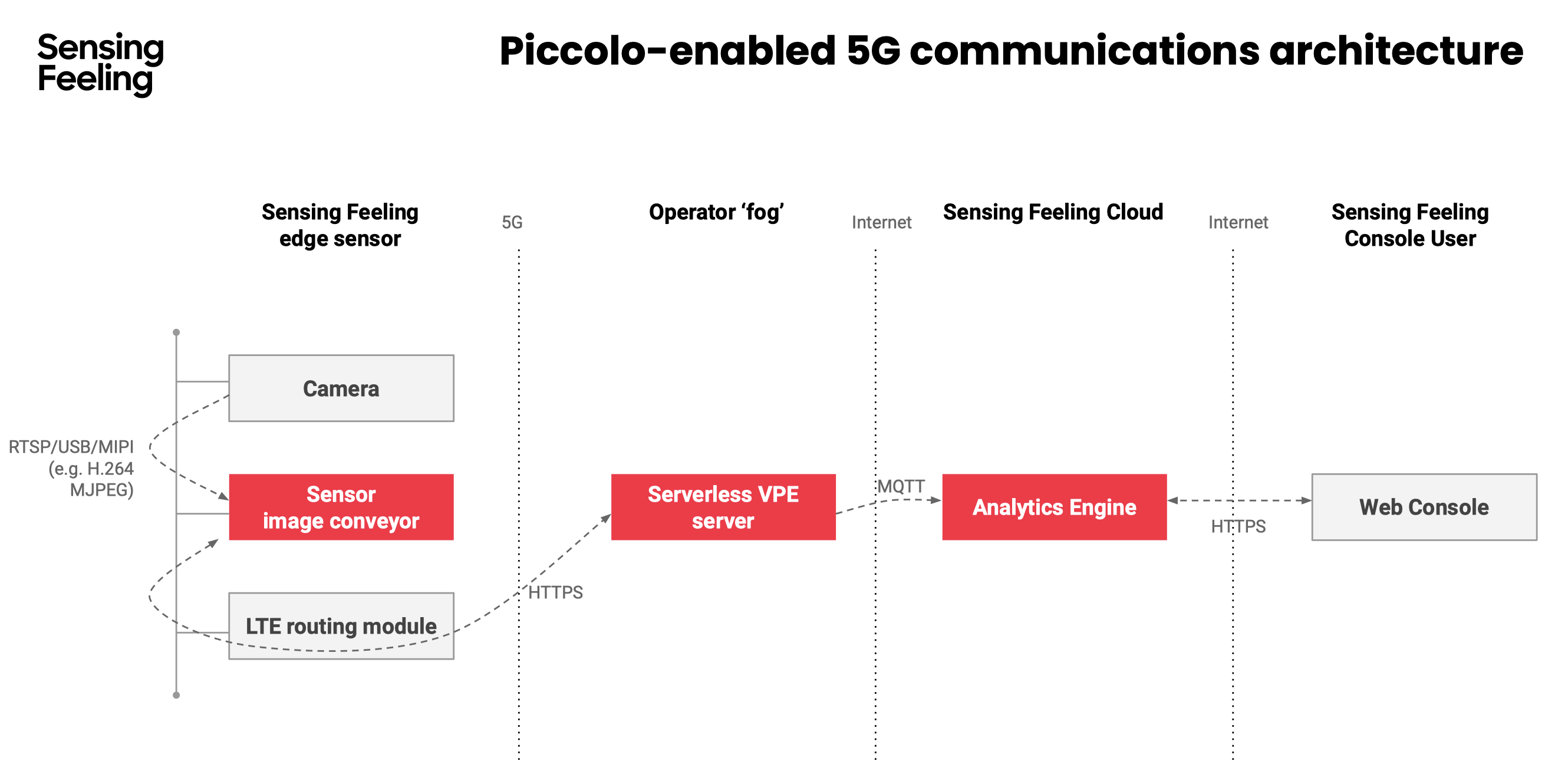

Piccolo partner Sensing Feeling’s current range of human behaviour and emotion-sensing products employs such an architecture. Pre-trained deep-learning vision processing models are deployed in a ‘containerised edge’ computing subsystem of multiple, independently functioning vision sensors installed at multiple locations in the customer’s physical environment. All of these sensors send narrowband IoT-style telemetry to the cloud platform, where the real-time data analytics and higher-order data processing take place.

However, the Piccolo approach enables a more flexible architecture that opens up the possibility of more efficient technical and operational approaches and therefore the potential for wider, more pervasive, market applications.

Piccolo Approach

The Piccolo project will move the concepts and research results on in-network computing closer to application. Through the application development in WP1, we will validate concepts and platforms that will be developed and extended by the project. Specifically, the main innovations that will be available are:

- Piccolo node execution environments that provide a trustable, secure and efficient platform for running fine-granular compute functions with adequate isolation and performance;

- Piccolo distributed computing software for different types of nodes (automotive platforms, edge nodes, cloud backends) that can distribute compute functions dynamically;

- Algorithms and implementations for the joint optimization of networking, computing and storage resources for Piccolo distributed computing;

- Control and orchestration systems that can implement different optimization goals and operator/user requirements, enabling Piccolo to leverage different types of network and compute infrastructure, including operated telco networks, enterprise infrastructure and roadside unit gateways;

- Applications from automotive edge computing and data offloading for vision processing that leverage the Piccolo distributed computing system and a heterogeneous set of underlying hardware platforms and execution environments; and

- Evaluation results for the different aspects of the system, including application development (usability, productivity), resource efficiency, resilience, scalability, and performance.
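As a rough illustration of what distributing compute functions under an optimisation goal could look like, the sketch below picks the cheapest node that satisfies a latency bound and a CPU requirement. It is a toy example under stated assumptions and not the project’s algorithm: the `Node` fields, the `place` function and all numbers are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Node:
    name: str
    rtt_ms: float    # round-trip time from the data source (assumed known)
    free_cpu: float  # CPU cores currently available
    cost: float      # relative cost per invocation


def place(nodes, max_rtt_ms: float, cpu_needed: float) -> Optional[Node]:
    """Return the cheapest node meeting both constraints, or None if none does."""
    feasible = [
        n for n in nodes
        if n.rtt_ms <= max_rtt_ms and n.free_cpu >= cpu_needed
    ]
    return min(feasible, key=lambda n: n.cost, default=None)


# Hypothetical continuum of car, roadside unit and cloud backend.
nodes = [
    Node("car", rtt_ms=1.0, free_cpu=0.5, cost=3.0),
    Node("roadside-unit", rtt_ms=5.0, free_cpu=2.0, cost=2.0),
    Node("cloud", rtt_ms=60.0, free_cpu=64.0, cost=1.0),
]

latency_critical = place(nodes, max_rtt_ms=10.0, cpu_needed=1.0)
batch_job = place(nodes, max_rtt_ms=500.0, cpu_needed=8.0)
print(latency_critical.name, batch_job.name)
```

With these sample values the latency-critical function lands on the roadside unit, while the batch job goes to the cloud. A real system would also have to account for storage, network load and changing conditions, as the list above indicates.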